Key Design Features of High-Precision Temperature Calibration Chambers

High-precision temperature calibration chambers represent sophisticated engineering solutions that combine advanced thermal control, robust construction, and intelligent monitoring systems. These specialized environments achieve temperature stability within ±0.5°C fluctuation, utilizing PID-controlled refrigeration systems, multi-layered insulation, and precision platinum resistance sensors. Modern chambers integrate programmable controllers with Ethernet connectivity, enabling remote monitoring and automated calibration protocols. From aerospace component testing to pharmaceutical validation, these chambers deliver the accuracy and repeatability essential for maintaining measurement traceability across industries requiring exacting thermal specifications.

Temperature Control System Architecture and PID Algorithms

Dual-Stage Refrigeration Configuration

Advanced temperature calibration chambers employ mechanical compression refrigeration systems featuring French TECUMSEH compressors paired with environmentally responsible refrigerants. The dual-stage architecture enables chambers to achieve temperature ranges spanning from -70℃ to +150℃, accommodating diverse calibration requirements. This configuration maintains consistent cooling rates of approximately 3℃ per minute while balancing energy consumption against performance demands.

Proportional-Integral-Derivative Control Logic

The heart of thermal precision lies within PID algorithms that continuously adjust heating and cooling outputs based on real-time sensor feedback. The proportional term responds to the current deviation from setpoint, the integral term removes the residual offset that proportional action alone leaves behind, and the derivative term reacts to the rate of change, damping oscillation around setpoints. Properly tuned, this combination minimizes overshoot during thermal transitions, ensuring calibration accuracy remains uncompromised throughout extended testing protocols.
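To make the control logic concrete, here is a minimal Python sketch of a textbook discrete PID loop driving a crude first-order chamber model. The gains, the heat-leak time constant, and the names `PID` and `simulate` are illustrative assumptions rather than the firmware of any particular chamber; only the 1℃/min heating and 3℃/min cooling rates come from the figures quoted in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    """Textbook discrete PID; output -1.0 is full cooling, +1.0 is full heating."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        raw = self.kp * error + self.ki * candidate + self.kd * derivative
        output = max(-1.0, min(1.0, raw))
        if output == raw:  # anti-windup: integrate only while unsaturated
            self.integral = candidate
        return output


def simulate(setpoint: float, minutes: float = 180.0, dt: float = 1.0) -> list:
    """Run the loop against a first-order chamber model (all constants assumed)."""
    ambient, tau = 25.0, 3600.0  # degC, heat-leak time constant in seconds
    heat_rate, cool_rate = 1.0 / 60.0, 3.0 / 60.0  # degC/s at full output
    pid = PID(kp=0.5, ki=0.002, kd=2.0)  # hypothetical gains
    temp, history = ambient, []
    for _ in range(int(minutes * 60.0 / dt)):
        u = pid.update(setpoint, temp, dt)
        drive = u * heat_rate if u > 0 else u * cool_rate
        temp += (drive - (temp - ambient) / tau) * dt
        history.append(temp)
    return history
```

Calling `simulate(60.0)` returns one simulated temperature per second. The conditional integration step is one simple anti-windup choice: it stops the integral from accumulating while the output is pinned at full heating or cooling during a long ramp.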

Nichrome Heating Element Integration

Nichrome heaters provide responsive thermal input with heating rates reaching 1℃ per minute. The resistance-based elements distribute heat uniformly across chamber volumes, working in concert with refrigeration systems to achieve rapid equilibrium. Strategic placement within air circulation pathways maximizes thermal transfer efficiency while maintaining component longevity under repeated cycling conditions.

| Thermal Component | Specification | Performance Impact |
| --- | --- | --- |
| Compressor Type | TECUMSEH (French) | -70℃ minimum achievable |
| Cooling Rate | 3℃/min | Rapid stabilization |
| Heating Rate | 1℃/min | Controlled warm-up cycles |
| Heat Load Capacity | 1000W | Accommodates test specimens |

Insulation Materials and Thermal Shielding Technology

Polyurethane Foam Core Structure

Multi-density polyurethane foam creates the primary thermal barrier, minimizing heat exchange between chamber interiors and ambient environments. The cellular structure traps air pockets that dramatically reduce thermal conductivity, enabling temperature calibration chambers to maintain extreme temperatures with manageable energy input. Foam thickness varies across chamber models, correlating directly with achievable temperature ranges and stability specifications.

Insulation Cotton Supplementary Layers

Beyond polyurethane foundations, specialized insulation cotton provides secondary thermal protection in critical zones. This fibrous material absorbs residual heat transfer, particularly around access points and structural joints where thermal bridging risks emerge. The combination creates redundant protection that ensures temperature deviation remains within ±2.0℃ across entire chamber volumes.

Double-Layer Window Sealing Systems

Observation windows incorporate double-layer thermostability silicone rubber seals that maintain visual access without compromising thermal integrity. The silicone composition withstands extreme temperature cycling while maintaining elastic properties that ensure airtight closure. Interior lighting systems integrate without creating thermal weak points, enabling specimen monitoring throughout calibration procedures.

Chamber Construction and Air Circulation Design

Stainless Steel Interior Configuration

SUS304 stainless steel interiors provide corrosion-resistant surfaces that withstand condensation, thermal stress, and chemical exposure from test specimens. The non-reactive material prevents contamination while facilitating cleaning protocols between calibration runs. Welded construction eliminates crevices that could trap moisture or compromise thermal uniformity across working volumes ranging from 100L to 1000L.

Centrifugal Wind Fan Circulation

Strategic air circulation patterns utilize centrifugal wind fans to eliminate thermal stratification within chambers. The forced convection continuously moves conditioned air across specimens, ensuring uniform temperature exposure regardless of shelf position. Adjustable-height shelving with perforated surfaces promotes airflow penetration, enabling simultaneous calibration of multiple sensors with consistent thermal exposure.

Protective Exterior Coating Systems

Steel plate exteriors receive specialized protective coatings that resist environmental corrosion while providing structural rigidity. The multi-layer finish withstands industrial environments where temperature calibration chambers operate continuously, protecting internal insulation and control systems from humidity and chemical exposure. Powder-coat applications ensure long-term aesthetic quality alongside functional durability.

| Construction Element | Material | Purpose |
| --- | --- | --- |
| Interior Walls | SUS304 Stainless | Corrosion resistance |
| Insulation | Polyurethane foam | Thermal barrier |
| Exterior Shell | Coated steel plate | Structural integrity |
| Shelving | Perforated steel | Air circulation |

Sensor Ports, Calibration Wells, and Access Interfaces

PT-100 Class A Platinum Resistance Sensors

Temperature measurement relies on PT-100 Class A platinum resistance thermometers that detect thermal changes at 0.001-degree resolution. The platinum elements exhibit predictable resistance variations across wide temperature ranges, providing reference accuracy essential for calibration traceability. Sensor positioning throughout chamber volumes enables multi-point verification of thermal uniformity during validation protocols.
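The "predictable resistance variation" of a PT-100 is usually modeled with the Callendar-Van Dusen equation from IEC 60751. The sketch below converts between resistance and temperature using the standard coefficients and reports the Class A tolerance, ±(0.15 + 0.002|t|)℃. The function names are illustrative, and the bisection inverse is just one simple way to solve the equation. Note that tolerance is a separate figure from the 0.001-degree readout resolution mentioned above.

```python
# IEC 60751 Callendar-Van Dusen coefficients for standard platinum (alpha = 0.00385)
R0 = 100.0       # ohms at 0 degC
A = 3.9083e-3
B = -5.775e-7
C = -4.183e-12   # applies only below 0 degC


def pt100_resistance(t_c: float) -> float:
    """Resistance in ohms at temperature t_c (degC)."""
    ratio = 1.0 + A * t_c + B * t_c ** 2
    if t_c < 0.0:
        ratio += C * (t_c - 100.0) * t_c ** 3
    return R0 * ratio


def pt100_temperature(r_ohm: float, lo: float = -200.0, hi: float = 850.0) -> float:
    """Invert pt100_resistance by bisection (resistance rises monotonically)."""
    for _ in range(60):
        mid = (lo + hi) / 2.0
        if pt100_resistance(mid) < r_ohm:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def class_a_tolerance(t_c: float) -> float:
    """IEC 60751 Class A tolerance in degC: +/-(0.15 + 0.002 * |t|)."""
    return 0.15 + 0.002 * abs(t_c)
```

For example, `pt100_resistance(100.0)` gives about 138.51 Ω, and `class_a_tolerance(150.0)` gives 0.45℃ at the top of the chamber range.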

Standard Cable Port Configuration

Each chamber includes standard cable ports with Φ50mm diameter openings equipped with sealing plugs. These passages accommodate thermocouple wires, power supplies, and data cables for specimens under test without compromising chamber integrity. The grommet design maintains thermal sealing while enabling external connections critical for device-under-test operation during calibration procedures.

Adjustable Shelf Access Systems

Interior configurations feature removable shelving systems with height-adjustable mounting positions. This flexibility accommodates specimens of varying dimensions while maintaining optimal air circulation patterns. The shelf design supports heat load distributions up to 1000W, enabling calibration of active devices that generate thermal output during operation.

Data Logging, Connectivity, and Software Integration

Programmable LCD Touchscreen Controllers

Modern temperature calibration chambers incorporate color LCD touchscreen interfaces that simplify programming complex thermal profiles. The intuitive controls enable operators to define multi-segment temperature ramps, hold periods, and cycling patterns without specialized training. Real-time graphical displays present chamber status, providing immediate feedback on thermal performance and deviation alerts.

Ethernet Network Integration

Built-in Ethernet connectivity transforms chambers into networked assets within laboratory management systems. The PC Link capability enables remote monitoring, automated data extraction, and integration with calibration management software. Network access supports compliance documentation requirements, automatically generating timestamped temperature records for regulatory submissions.
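As a rough illustration of what a timestamped temperature record might look like once it has been pulled from a chamber, the sketch below writes samples to CSV using only the Python standard library. The column names and the `write_log` function are hypothetical. This is not the PC Link export format, which the article does not describe.

```python
import csv
import io
from datetime import datetime, timedelta, timezone


def write_log(samples, start, interval_s, stream):
    """Write (setpoint, measured) samples as timestamped CSV rows."""
    writer = csv.writer(stream)
    writer.writerow(["timestamp_utc", "setpoint_c", "measured_c"])
    for i, (setpoint, measured) in enumerate(samples):
        stamp = start + timedelta(seconds=i * interval_s)
        writer.writerow([stamp.isoformat(), f"{setpoint:.1f}", f"{measured:.3f}"])


# Usage: two samples taken one minute apart, written to an in-memory buffer.
buffer = io.StringIO()
write_log(
    [(25.0, 24.987), (25.0, 25.012)],
    datetime(2024, 1, 1, tzinfo=timezone.utc),
    60,
    buffer,
)
```

Storing UTC timestamps in ISO 8601 form keeps records unambiguous when they are merged from chambers in different time zones.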

Automated Profile Execution

Programmable controllers execute stored thermal profiles with precise timing and temperature control. The automation eliminates manual intervention during extended calibration sequences, reducing operator errors while ensuring repeatability across multiple calibration events. Profile libraries accommodate industry-standard calibration protocols, accelerating validation procedures.
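A stored profile is essentially a list of target temperatures and hold times, so its run time can be estimated from the ramp rates quoted earlier (about 3℃/min cooling, 1℃/min heating). The sketch below does that arithmetic. It assumes the rates hold constant across the whole range, which real chambers only approximate, and the function name and segment format are illustrative.

```python
COOL_RATE = 3.0  # degC/min, cooling rate quoted in this article
HEAT_RATE = 1.0  # degC/min, heating rate quoted in this article


def profile_minutes(start_c, segments):
    """Estimate run time for (target_degC, hold_min) segments at the rated ramp speeds."""
    total = 0.0
    current = start_c
    for target, hold in segments:
        delta = target - current
        rate = HEAT_RATE if delta > 0 else COOL_RATE
        total += abs(delta) / rate + hold
        current = target
    return total


# Usage: from 25 degC down to -70, up to 0, then up to 150, holding 30 min at each point.
estimate = profile_minutes(25.0, [(-70.0, 30.0), (0.0, 30.0), (150.0, 30.0)])
```

Here the estimate comes to roughly 342 minutes, most of it spent on the two heating ramps, which is worth knowing before scheduling an overnight run.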

| Connectivity Feature | Capability | Benefit |
| --- | --- | --- |
| Touchscreen Interface | Profile programming | Simplified operation |
| Ethernet Access | Remote monitoring | Real-time oversight |
| Data Logging | Automated records | Compliance documentation |
| PC Link | Software integration | System connectivity |

Safety Mechanisms and Energy Efficiency Considerations

Multi-Layer Protection Systems

Comprehensive safety architecture includes over-temperature protection that halts heating operations when thermal limits exceed programmed thresholds. Over-current protection safeguards electrical components from power surges, while refrigerant high-pressure protection prevents compressor damage. Earth leakage protection ensures operator safety, particularly critical when chambers operate with lithium-ion battery specimens that present unique hazards.

Thermal Management Optimization

Energy efficiency emerges from intelligent thermal management that minimizes heating and cooling conflicts. The control systems coordinate compressor operation with heater activation, avoiding simultaneous opposing actions that waste energy. Insulation quality directly impacts operational costs, with superior thermal barriers reducing the work required to maintain extreme temperatures.
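One common way to avoid heating and cooling at the same time is split-range control: a single signed demand is routed to either the heater or the refrigeration side, with a small deadband around zero. The sketch below shows the idea. The function name and the 5% deadband are assumptions, and some chambers deliberately run the compressor continuously and trim with the heater instead.

```python
def split_range(output: float, deadband: float = 0.05):
    """Map a signed demand in [-1, 1] to (heater, cooling) duties that never overlap."""
    output = max(-1.0, min(1.0, output))
    if output > deadband:
        return output, 0.0
    if output < -deadband:
        return 0.0, -output
    return 0.0, 0.0
```

With this mapping, `split_range(0.6)` drives only the heater at 60% and `split_range(-0.3)` drives only cooling at 30%, while small demands near zero switch both off.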

Lithium-Ion Battery Testing Safeguards

Specialized safety options address risks associated with lithium-ion battery calibration and testing. These provisions include pressure relief mechanisms, flame-resistant interior materials, and enhanced ventilation systems that manage thermal runaway scenarios. The safety enhancements enable battery manufacturers to conduct essential validation procedures with reduced facility risks.

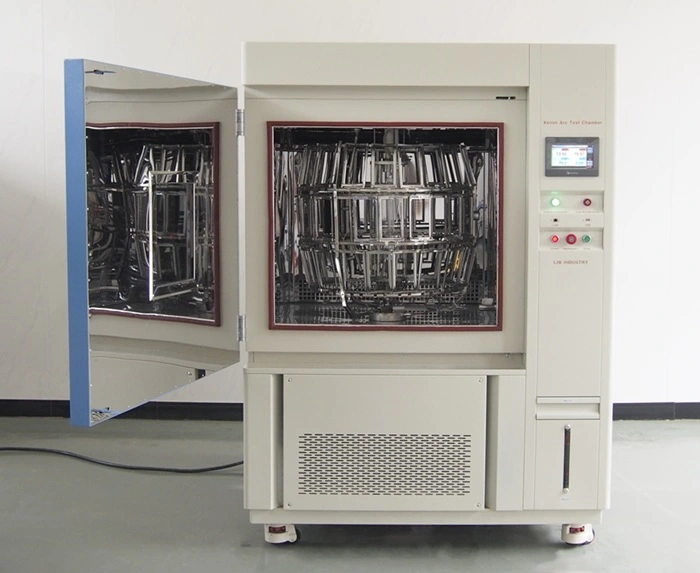

LIB Industry Temperature Calibration Chamber: Precision Engineered Design

Comprehensive Model Range

LIB Industry offers temperature calibration chambers spanning from compact 100L models (T-100) to expansive 1000L configurations (T-1000). The range addresses diverse laboratory footprints while maintaining consistent performance specifications across the product line. Overall dimensions scale proportionally, with the largest model measuring 1500mm × 1540mm × 2140mm, accommodating substantial specimen volumes.

Temperature Range Versatility

Three distinct temperature range options serve varying calibration requirements. Range A (-20℃ to +150℃) addresses standard industrial applications, while Range B (-40℃ to +150℃) extends capability for cold-climate component validation. Range C (-70℃ to +150℃) provides extreme low-temperature capability essential for aerospace and specialty applications requiring cryogenic calibration points.
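Choosing among the three options comes down to finding the narrowest range that still covers every calibration point you need. A small sketch of that selection follows; the `RANGES` table mirrors the figures above, and the function name is illustrative.

```python
RANGES = {"A": (-20.0, 150.0), "B": (-40.0, 150.0), "C": (-70.0, 150.0)}


def narrowest_range(points_c):
    """Return the first (narrowest) range option that covers every calibration point."""
    lo, hi = min(points_c), max(points_c)
    for name, (r_lo, r_hi) in RANGES.items():  # dicts keep insertion order: A, B, C
        if r_lo <= lo and hi <= r_hi:
            return name
    return None  # no listed option covers the requested points
```

For example, calibration points of -30℃ and 25℃ map to Range B, while a -55℃ point requires Range C.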

Turn-Key Solution Integration

Beyond equipment supply, LIB Industry provides comprehensive support encompassing research, design, production, commissioning, delivery, installation, and operator training. This turn-key approach ensures chambers integrate seamlessly into existing calibration workflows, minimizing implementation timelines. Technical support extends throughout equipment lifecycles, maintaining calibration accuracy through preventive maintenance and periodic revalidation services.

Application Across Industries

Temperature calibration chambers serve critical roles in electronics manufacturing, pharmaceutical validation, aerospace component qualification, and research laboratories. Electronics manufacturers verify sensor accuracy for consumer devices, while pharmaceutical companies validate environmental monitoring systems ensuring product integrity. Aerospace applications demand extreme temperature performance for component qualification under operational conditions, making high-precision chambers indispensable tools.

| LIB Chamber Model | Internal Volume | Overall Dimensions | Ideal Applications |
| --- | --- | --- | --- |
| T-100 | 100L | 900×1050×1620mm | Small component testing |
| T-225 | 225L | 1000×1140×1870mm | Sensor calibration |
| T-500 | 500L | 1200×1340×2020mm | Multi-device validation |
| T-800 | 800L | 1300×1540×2120mm | Battery testing |
| T-1000 | 1000L | 1500×1540×2140mm | Large specimen qualification |

Conclusion

High-precision temperature calibration chambers embody sophisticated engineering that merges thermal control systems, robust construction, and intelligent monitoring capabilities. The integration of PID algorithms, platinum resistance sensors, and mechanical refrigeration creates environments achieving ±0.5℃ stability across extreme temperature ranges. Strategic insulation, forced air circulation, and stainless steel construction ensure long-term reliability while programmable controllers with network connectivity streamline calibration workflows. These design features collectively enable measurement traceability essential for quality assurance across electronics, pharmaceutical, aerospace, and research applications.

FAQs

What temperature uniformity can high-precision calibration chambers achieve?

Modern temperature calibration chambers maintain temperature deviation within ±2.0℃ across the working volume with fluctuation controlled to ±0.5℃ at setpoint. This uniformity results from forced air circulation, strategic insulation placement, and PID-controlled heating and cooling systems that continuously balance thermal inputs throughout the chamber interior.

How do PT-100 sensors contribute to calibration accuracy?

PT-100 Class A platinum resistance sensors provide reference-grade temperature measurement with 0.001-degree resolution. The platinum element's predictable resistance change with temperature enables traceable calibration across wide ranges. Multiple sensor placement throughout chambers verifies thermal uniformity, ensuring specimens receive consistent exposure regardless of position during calibration procedures.

What safety features protect lithium-ion battery testing?

Specialized chambers for lithium-ion battery calibration incorporate enhanced safety mechanisms including over-temperature protection, pressure relief systems, flame-resistant interior materials, and advanced ventilation. These provisions manage thermal runaway risks while enabling essential battery validation procedures, protecting both equipment and facility from potential hazards associated with energetic cell testing.

As a leading temperature calibration chamber manufacturer and supplier, LIB Industry delivers precision-engineered environmental testing equipment with comprehensive turn-key support. Contact our technical team at ellen@lib-industry.com to discuss your specific calibration requirements and explore customized chamber configurations for your facility.

How to Select the Best Calibration Chamber for Temperature Sensors?

Selecting the right temperature calibration chamber requires careful evaluation of technical specifications, operational requirements, and long-term reliability. The ideal chamber combines precise temperature control, excellent uniformity, and appropriate sensor compatibility to deliver accurate calibration results. Consider your specific application needs (whether electronics, pharmaceuticals, aerospace, or research) alongside essential features like programmable controls, safety mechanisms, and compliance with international standards. Budget constraints, available space, and manufacturer support also play crucial roles in making an informed decision that ensures measurement accuracy and operational efficiency.

What Factors Define a High-Performance Calibration Chamber?

Precision Control Systems

High-performance chambers incorporate advanced control systems that maintain temperature accuracy within tight tolerances. Modern programmable touchscreen controllers with Ethernet connectivity enable real-time monitoring and data logging. The PT-100 Class A sensor technology detects temperature changes at 0.001-degree precision, ensuring reliable measurements throughout the calibration process. These sophisticated systems eliminate manual errors and provide consistent performance across extended operational periods.

Chamber Construction Quality

Superior construction directly impacts calibration accuracy and longevity. Quality chambers utilize SUS304 stainless steel interiors resistant to corrosion and contamination. Polyurethane foam combined with insulation cotton provides excellent thermal retention, minimizing external temperature influences. Double-layer thermostability silicone rubber sealing around observation windows prevents heat leakage while maintaining visibility during testing procedures.

Mechanical Refrigeration Efficiency

Environmentally friendly refrigeration systems using TECUMSEH compressors deliver rapid cooling rates up to 3°C per minute. Efficient refrigeration cycles enable quick temperature transitions between calibration points, reducing overall testing time. Refrigerant high-pressure protection and over-current safety devices prevent equipment damage during intensive operations, extending the temperature calibration chamber's operational lifespan while maintaining consistent performance.

Evaluating Temperature Range, Stability, and Uniformity

Understanding Required Temperature Span

Different applications demand specific temperature ranges. Basic calibration needs might require -20°C to +150°C, while specialized aerospace or cryogenic applications necessitate extremes reaching -70°C. Selecting chambers with appropriate range prevents unnecessary investment in excessive capabilities. Consider both current requirements and potential future applications when determining the optimal temperature span for your facility.

Temperature Stability Metrics

Temperature fluctuation represents short-term variations around the setpoint, typically measured as ±0.5°C in quality chambers. Stability affects calibration uncertainty calculations and determines confidence levels in measurement results. Chambers with minimal fluctuation reduce calibration time and improve repeatability. Deviation measurements of ±2.0°C across the working volume indicate acceptable uniformity for most industrial calibration applications.

| Performance Parameter | Specification | Impact on Calibration |
| --- | --- | --- |
| Temperature Fluctuation | ±0.5°C | Short-term stability |
| Temperature Deviation | ±2.0°C | Spatial uniformity |
| Cooling Rate | 3°C/min | Efficiency |
| Heating Rate | 1°C/min | Process speed |

Spatial Uniformity Assessment

Temperature uniformity throughout the working volume determines calibration quality. Centrifugal wind fans ensure consistent air circulation, eliminating hot or cold spots. Adjustable shelving promotes optimal airflow around sensors during calibration. Chambers should demonstrate uniform temperature distribution documented through standardized mapping procedures, confirming suitability for multi-point calibration workflows.
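A mapping run typically logs several sensors at one stabilized setpoint, and the two headline figures can be computed from that log. The sketch below uses one common convention: fluctuation is half the peak-to-peak variation over time at a single sensor, and deviation is the largest offset between any sensor's mean and the setpoint. Exact definitions differ between standards and manufacturers, and the sample readings are invented for illustration.

```python
from statistics import mean


def fluctuation(readings):
    """Worst-case half peak-to-peak variation over time at any single sensor (degC)."""
    return max((max(r) - min(r)) / 2.0 for r in readings.values())


def deviation(readings, setpoint):
    """Largest offset between any sensor's time-averaged reading and the setpoint (degC)."""
    return max(abs(mean(r) - setpoint) for r in readings.values())


# Hypothetical mapping data at a 100 degC setpoint (sensor position -> readings over time).
mapping = {
    "center": [100.1, 99.9, 100.0, 100.2],
    "top_left": [101.0, 100.8, 101.1, 100.9],
    "bottom_right": [99.2, 99.0, 99.3, 99.1],
}
within_spec = fluctuation(mapping) <= 0.5 and deviation(mapping, 100.0) <= 2.0
```

In this invented data set the fluctuation is about 0.15°C and the deviation about 0.95°C, so the run would pass against the ±0.5°C and ±2.0°C figures.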

Sensor Compatibility and Mounting Configurations

Platinum Resistance Thermometer Integration

PT100 sensors remain the industry standard for temperature calibration due to excellent linearity and stability. Chamber designs incorporating Class A PT100 (100 Ω) platinum resistance sensors provide reference-grade accuracy. Verify compatibility between your existing sensor types and chamber interface options. Some applications require simultaneous calibration of multiple sensor formats, necessitating versatile mounting solutions.

Thermocouple Accommodation

Many industrial processes employ thermocouples for temperature measurement. Temperature calibration chambers must accommodate various thermocouple types - K, J, T, E, and others - with appropriate connection terminals. Cable holes with adjustable plugs facilitate external connections while maintaining chamber integrity. Standard configurations typically include 50mm diameter cable ports suitable for most instrumentation wiring requirements.

Custom Mounting Solutions

Removable and adjustable shelving allows flexible sensor positioning within the chamber. Applications involving large sensors or multiple simultaneous calibrations benefit from customizable rack systems. Mounting configurations should prevent mutual interference between sensors while ensuring adequate exposure to controlled atmosphere. Consult manufacturers about specialized fixtures matching your specific calibration protocols and sensor geometries.

Calibration Standards: ISO/IEC 17025 and ITS-90 Compliance

ISO/IEC 17025 Requirements

Accredited calibration laboratories must demonstrate competence through ISO/IEC 17025 compliance. Temperature chambers supporting accreditation require documented traceability, measurement uncertainty calculations, and environmental monitoring capabilities. Equipment qualification protocols include installation qualification, operational qualification, and performance qualification. Chambers with integrated data logging and network connectivity simplify documentation requirements for audit trails.

International Temperature Scale Alignment

The International Temperature Scale of 1990 (ITS-90) defines modern temperature measurement standards. Measurements made in a calibration chamber must be traceable to ITS-90 through national metrology institutes. Fixed-point cells or certified reference thermometers establish these traceability chains. Understanding ITS-90 requirements helps ensure calibration results gain international recognition and acceptance across borders and industries.

| Standard | Application Scope | Key Requirements |
| --- | --- | --- |
| ISO/IEC 17025 | Laboratory competence | Traceability, documentation, quality management |
| ITS-90 | Temperature definition | Reference points, measurement hierarchy |
| NIST Guidelines | US traceability | Calibration procedures, uncertainty analysis |

Documentation and Validation

Comprehensive validation packages should accompany chamber purchases. Temperature mapping reports, uncertainty budgets, and calibration certificates demonstrate performance compliance. Regular recalibration intervals - typically annually - maintain accuracy over time. Manufacturers providing validation services streamline compliance processes and reduce operational burdens on calibration laboratories.
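An uncertainty budget usually combines its components by root-sum-square, following the GUM approach: each limit is converted to a standard uncertainty, the uncorrelated terms are added in quadrature, and the result is multiplied by a coverage factor (k = 2 for roughly 95% confidence). The sketch below applies that to three illustrative components. Treating the specification limits as rectangular distributions is a conservative simplification, and a real budget would draw on measured mapping data and calibration certificates.

```python
import math


def rectangular(half_width):
    """Standard uncertainty of a rectangular distribution with the given half-width."""
    return half_width / math.sqrt(3.0)


def expanded_uncertainty(components, k=2.0):
    """Root-sum-square of uncorrelated standard uncertainties, times coverage factor k."""
    return k * math.sqrt(sum(u ** 2 for u in components))


# Illustrative budget at 100 degC (values assumed, not measured):
budget = [
    rectangular(0.5),   # chamber fluctuation limit
    rectangular(2.0),   # spatial deviation limit
    rectangular(0.35),  # Class A reference sensor tolerance at 100 degC
]
U = expanded_uncertainty(budget)
```

The spatial deviation term dominates here (U is about 2.4°C), which is why comparison calibrations normally place the reference thermometer right next to the sensors under test rather than relying on whole-chamber uniformity.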

Comparing Manual and Automated Calibration Systems

Manual Calibration Advantages

Manual systems offer flexibility and lower initial investment costs. Operators control temperature setpoints directly through touchscreen interfaces, adjusting parameters based on real-time observations. This approach suits laboratories performing occasional calibrations or requiring customized test protocols. Manual operation develops operator expertise and provides intimate understanding of chamber characteristics and sensor behavior.

Automated System Benefits

Automated calibration systems execute preprogrammed sequences with minimal supervision. Programmable controllers cycle through multiple temperature points, recording data automatically. Automation reduces human error, increases throughput, and enables overnight operations. Network connectivity allows remote monitoring and control through computer interfaces. Laboratories processing high volumes benefit significantly from automation investments despite higher upfront costs.

Hybrid Approaches

Many modern temperature calibration chambers combine manual flexibility with automated capabilities. Operators can switch between modes depending on application requirements. Stored programs handle routine calibrations while manual override accommodates specialized tests. This versatility maximizes equipment utilization across diverse calibration portfolios. Evaluate workflow patterns when selecting between pure manual, automated, or hybrid configurations.

Choosing Reliable Manufacturers and After-Sales Support

Manufacturer Reputation Assessment

Established manufacturers demonstrate proven track records through customer testimonials and industry presence. Research company history, technical expertise, and quality certifications. Manufacturers specializing in environmental testing equipment typically offer deeper knowledge than generalist suppliers. Review case studies showing successful installations in applications similar to yours, validating manufacturer capabilities.

Technical Support Infrastructure

Comprehensive technical support extends beyond initial installation. Responsive customer service teams address operational questions and troubleshooting needs. Manufacturers should provide detailed operation manuals, maintenance schedules, and spare parts availability information. Training programs for operators and maintenance personnel ensure optimal equipment utilization and longevity. Verify support availability during your operational hours.

Warranty and Service Agreements

Standard warranties typically cover one year from installation, protecting against manufacturing defects. Extended service agreements provide peace of mind for critical applications. Clarify coverage details including parts, labor, and response times. On-site service capability proves valuable for complex repairs requiring specialized knowledge. Compare warranty terms across manufacturers when evaluating similar equipment specifications.

| Support Aspect | Evaluation Criteria | Business Impact |
| --- | --- | --- |
| Technical Assistance | Response time, expertise depth | Minimizes downtime |
| Spare Parts | Availability, delivery speed | Operational continuity |
| Training | Comprehensiveness, accessibility | Performance optimization |
| Warranty Coverage | Duration, inclusions | Cost management |

LIB Industry Temperature Calibration Chamber: Optimal Sensor Testing Choice

Product Range Overview

LIB Industry offers comprehensive temperature calibration solutions spanning 100L to 1000L internal volumes. Models accommodate diverse laboratory space constraints while providing consistent performance characteristics. Lower temperature limits from -20°C down to -70°C support applications from general industrial calibration to specialized cryogenic requirements. The 1000W heat load capacity handles substantial thermal demands during intensive testing protocols.

Advanced Control Technology

Programmable color LCD touchscreen controllers simplify operation through intuitive interfaces. Ethernet connectivity enables seamless integration into laboratory management systems and remote monitoring platforms. PC Link functionality supports data export for analysis and reporting requirements. Real-time temperature tracking with PT-100 Class A sensors ensures calibration accuracy meeting stringent metrology standards across all operational conditions.

Safety and Reliability Features

Comprehensive safety systems protect equipment and personnel during operations. Over-temperature protection prevents thermal runaway conditions, while earth leakage protection guards against electrical hazards. Refrigerant high-pressure monitoring prevents compressor damage during extreme cooling demands. These integrated safety mechanisms reduce maintenance requirements and extend operational lifespan, maximizing return on investment for calibration facilities.

Customization Capabilities

LIB Industry provides turn-key solutions encompassing design, production, commissioning, installation, and training. Custom configurations address unique application requirements beyond standard specifications. Technical consultation services help laboratories optimize chamber selection for specific calibration portfolios. This comprehensive approach ensures equipment perfectly matches operational needs while meeting budget constraints and space limitations.

Conclusion

Selecting the optimal temperature calibration chamber demands thorough evaluation of technical specifications, operational requirements, and manufacturer support. Prioritize chambers offering appropriate temperature range, excellent stability, and sensor compatibility matching your calibration portfolio. Ensure compliance with relevant standards while balancing manual flexibility against automation benefits. Partner with experienced manufacturers providing comprehensive support throughout equipment lifecycle. Investing time in proper selection yields accurate calibrations, operational efficiency, and long-term reliability supporting quality measurement programs.

FAQs

What temperature range should I select for general industrial sensor calibration?

Most industrial applications require -20°C to +150°C range, covering typical process measurement needs. Specialized applications like aerospace or cryogenics may necessitate extended ranges to -70°C. Evaluate your current sensor inventory and anticipated future requirements before finalizing specifications.

How often should temperature calibration chambers undergo recalibration?

Annual recalibration intervals satisfy most quality system requirements and maintain ISO/IEC 17025 accreditation. Critical applications or extreme environmental conditions may warrant semi-annual verification. Consult your quality management procedures and regulatory requirements for specific guidance.

Can automated calibration systems accommodate different sensor types simultaneously?

Advanced automated systems support multiple sensor types through programmable configurations and versatile mounting solutions. Chambers with adequate internal volume and flexible cabling provisions enable concurrent calibration of diverse sensors. Confirm compatibility specifications with manufacturers matching your multi-sensor calibration protocols.

Ready to enhance your temperature calibration capabilities? LIB Industry, a leading temperature calibration chamber manufacturer and supplier, delivers precision environmental testing solutions worldwide.

Contact our technical team today.

How Temperature Calibration Chambers Ensure Measurement Accuracy

Temperature calibration chambers maintain measurement accuracy through precise environmental control, using advanced refrigeration systems, platinum resistance sensors, and programmable controllers to create stable thermal conditions. These specialized enclosures eliminate temperature fluctuations and deviations, ensuring instruments undergo verification under reproducible conditions. By combining mechanical compression cooling, nichrome heating elements, and centrifugal circulation fans, calibration chambers achieve uniform thermal distribution across the testing volume. This controlled environment enables metrological traceability, reduces measurement uncertainty, and verifies sensor performance against known standards - ultimately safeguarding quality assurance protocols across industries where temperature-dependent processes demand absolute precision.

Why Is Temperature Calibration Essential for Reliable Measurements?

Preventing Measurement Drift in Critical Applications

Uncalibrated temperature sensors gradually lose accuracy over time due to thermal cycling, mechanical stress, and environmental exposure. This phenomenon, known as measurement drift, can compromise product quality in pharmaceutical manufacturing, aerospace testing, and electronics production. Regular verification using calibration chambers establishes baseline performance, identifying sensors that have drifted beyond acceptable tolerances before they impact production outcomes or safety protocols.

Establishing Metrological Traceability

Traceability connects measurement results to international standards through an unbroken chain of comparisons. Temperature calibration chambers serve as reference environments where secondary standards undergo verification against primary standards. This hierarchical system ensures that temperature measurements anywhere in the supply chain trace back to nationally recognized metrology institutes, satisfying regulatory requirements and quality management system audits.

Economic Impact of Measurement Errors

Temperature measurement failures cost industries millions annually through rejected batches, equipment damage, and warranty claims. A pharmaceutical manufacturer losing a single vaccine batch due to storage temperature deviation may face losses exceeding hundreds of thousands of dollars. Calibration chambers prevent these scenarios by validating monitoring equipment before deployment, reducing risk exposure and protecting revenue streams.

Principles of Operation in Temperature Calibration Chambers

Thermal Equilibrium and Stability Requirements

Achieving thermal equilibrium requires balancing heat input, removal, and distribution throughout the chamber volume. The system reaches stability when temperature variation falls within specified limits - typically ±0.5°C fluctuation and ±2.0°C deviation across the workspace. This stability depends on insulation effectiveness, air circulation patterns, and control system response time. Polyurethane foam combined with insulation cotton minimizes heat exchange with ambient conditions, while centrifugal fans maintain uniform temperature distribution.

Mechanical Compression Refrigeration Systems

Modern temperature calibration chambers employ cascade refrigeration or dual-stage compression to achieve extreme low temperatures. French TECUMSEH compressors use environmentally friendly refrigerants that undergo phase changes to absorb heat from the chamber interior. The refrigeration cycle includes compression, condensation, expansion, and evaporation stages, with each cycle extracting thermal energy. Cooling rates typically reach 3°C per minute, enabling rapid transitions between calibration points across temperature ranges spanning -70°C to +150°C.

Heating Element Configuration

Nichrome wire heating elements provide precise thermal energy input, offering rapid response times and excellent temperature uniformity. These resistive heaters convert electrical current into thermal energy with minimal overshoot, achieving heating rates around 1°C per minute. The controlled heating rate prevents thermal shock to test specimens while maintaining chamber stability. Strategic placement of heating elements throughout the chamber ensures even heat distribution, eliminating cold spots that could compromise calibration accuracy.
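
The ramp rates quoted above allow a rough estimate of how long a move between two calibration points takes. The sketch below assumes constant linear rates (3°C/min cooling, 1°C/min heating, as stated in the text); real chambers slow down near their range limits, and the function name is hypothetical.

```python
COOLING_RATE_C_PER_MIN = 3.0  # cooling rate quoted in the text
HEATING_RATE_C_PER_MIN = 1.0  # heating rate quoted in the text


def transition_minutes(start_c: float, target_c: float) -> float:
    """Estimate ramp time between two setpoints, assuming a constant ramp rate."""
    delta = target_c - start_c
    rate = HEATING_RATE_C_PER_MIN if delta > 0 else COOLING_RATE_C_PER_MIN
    return abs(delta) / rate


# Ramp time only; stabilization time at the new setpoint is not included.
print(f"25 -> -70 °C: {transition_minutes(25.0, -70.0):.1f} min")
print(f"25 -> 150 °C: {transition_minutes(25.0, 150.0):.1f} min")
```

A calibration schedule would add a soak period at each point on top of these ramp times.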

Factors Affecting Thermal Stability and Accuracy

Air Circulation and Stratification Control

Temperature stratification - where warmer air accumulates at upper levels while cooler air settles below - threatens measurement uniformity. Centrifugal fans create forced convection patterns that homogenize the thermal environment. Fan placement, blade design, and rotation speed all influence circulation effectiveness. Proper airflow prevents stagnant zones and helps ensure that sensors experience near-identical thermal conditions regardless of their position within the workspace.

Insulation Material Properties

Thermal insulation determines how effectively chambers maintain setpoint temperatures against ambient heat transfer. Materials with low thermal conductivity - such as polyurethane foam - create barriers that reduce energy consumption and improve temperature stability. Insulation thickness, joint sealing, and door gasket integrity all contribute to overall thermal performance. Double-layer thermostable silicone rubber seals on observation windows prevent heat leakage while allowing visual monitoring.

Sensor Response Time and Placement

PT-100 Class A platinum resistance thermometers detect temperature changes at 0.001-degree resolution, providing real-time feedback to control systems. Sensor placement near the geometric center of the workspace samples the most representative temperature, while additional sensors at corners verify uniformity. Response time - the interval required for sensors to register 63.2% of a step change - affects control loop performance and stability.
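
The 63.2% figure comes from the step response of a first-order system, where the registered fraction after time t is 1 - exp(-t/τ) and τ is the time constant. A minimal sketch, assuming the sensor behaves as an ideal first-order lag (the 5-second time constant is a hypothetical value, not a specification):

```python
import math


def step_response_fraction(t_s: float, tau_s: float) -> float:
    """Fraction of a step change registered after t_s seconds by a first-order sensor."""
    return 1.0 - math.exp(-t_s / tau_s)


def time_to_fraction(fraction: float, tau_s: float) -> float:
    """Time needed to register the given fraction (0 < fraction < 1) of a step."""
    return -tau_s * math.log(1.0 - fraction)


tau = 5.0  # hypothetical sensor time constant in seconds
print(round(step_response_fraction(tau, tau), 3))  # 0.632 at t = tau
print(round(time_to_fraction(0.99, tau), 1))       # seconds to register 99% of the step
```

Under this model, settling to within 1% of a step takes roughly 4.6 time constants, which is why slow sensors limit how aggressively the control loop can be tuned.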

| Parameter | Specification | Impact on Accuracy |
| --- | --- | --- |
| Temperature Fluctuation | ±0.5°C | Defines short-term stability and measurement repeatability |
| Temperature Deviation | ±2.0°C | Indicates spatial uniformity across the entire workspace |
| Cooling Rate | 3°C/min | Determines transition speed between calibration points |
| Heating Rate | 1°C/min | Controls thermal shock and prevents overshoot conditions |
| Sensor Resolution | 0.001°C | Establishes the finest detectable temperature change |
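
To make the fluctuation and deviation figures concrete, the sketch below checks logged readings against the ±0.5°C and ±2.0°C limits. The definitions used here are assumptions for illustration (fluctuation as half the peak-to-peak variation over time at one point, deviation as the largest offset of any point's time-averaged reading from the setpoint); the applicable test standard may define them differently, and the logged data is invented.

```python
from statistics import mean


def fluctuation(center_readings: list[float]) -> float:
    """Half the peak-to-peak variation over time at one point (assumed definition)."""
    return (max(center_readings) - min(center_readings)) / 2.0


def deviation(setpoint: float, readings_by_point: dict[str, list[float]]) -> float:
    """Largest offset of any point's time-averaged reading from the setpoint (assumed definition)."""
    return max(abs(mean(r) - setpoint) for r in readings_by_point.values())


# Hypothetical logged data at a 100 °C setpoint
log = {
    "center": [100.1, 99.8, 100.2, 99.9],
    "top_corner": [101.2, 101.0, 101.3, 101.1],
    "bottom_corner": [98.9, 99.1, 98.8, 99.0],
}
fl = fluctuation(log["center"])
dev = deviation(100.0, log)
print(f"fluctuation ±{fl:.2f} °C, within ±0.5 °C: {fl <= 0.5}")
print(f"deviation ±{dev:.2f} °C, within ±2.0 °C: {dev <= 2.0}")
```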

Role of Temperature Sensors and Control Systems

Platinum Resistance Thermometry Technology

PT-100 sensors exploit the predictable relationship between platinum resistance and temperature, offering exceptional linearity and long-term stability. The 100-ohm nominal resistance at 0°C increases approximately 0.385 ohms per degree Celsius. Class A sensors provide accuracy within ±0.15°C at 0°C, tightening tolerance requirements compared to Class B alternatives. This precision makes platinum resistance thermometers the preferred choice for calibration reference measurements.
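
The resistance-temperature relationship and tolerance classes can be sketched with the Callendar-Van Dusen equation and the IEC 60751 coefficients. This example covers only temperatures at or above 0°C (the sub-zero form needs an additional term that is omitted here), and the function names are illustrative.

```python
# IEC 60751 Callendar-Van Dusen coefficients, valid for t >= 0 °C
R0 = 100.0     # ohms at 0 °C
A = 3.9083e-3  # 1/°C
B = -5.775e-7  # 1/°C^2


def pt100_resistance(t_c: float) -> float:
    """Resistance in ohms for t_c >= 0 °C (sub-zero term not implemented in this sketch)."""
    if t_c < 0:
        raise ValueError("this sketch covers only t >= 0 °C")
    return R0 * (1.0 + A * t_c + B * t_c ** 2)


def tolerance_c(t_c: float, sensor_class: str = "A") -> float:
    """IEC 60751 tolerance in °C: Class A ±(0.15 + 0.002|t|), Class B ±(0.30 + 0.005|t|)."""
    base, slope = {"A": (0.15, 0.002), "B": (0.30, 0.005)}[sensor_class]
    return base + slope * abs(t_c)


r100 = pt100_resistance(100.0)
print(f"R(100 °C) = {r100:.4f} ohm, mean slope = {(r100 - R0) / 100.0:.4f} ohm/°C")
print(f"Class A at 100 °C: ±{tolerance_c(100.0, 'A'):.2f} °C")
print(f"Class B at 100 °C: ±{tolerance_c(100.0, 'B'):.2f} °C")
```

The mean slope between 0°C and 100°C is where the approximate 0.385 ohms per degree figure comes from; the quadratic term makes the true curve slightly nonlinear.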

Programmable Control Algorithms

Modern temperature calibration chambers' programmable color LCD touchscreen controllers implement proportional-integral-derivative (PID) algorithms that continuously adjust heating and cooling outputs. The proportional component responds to current error magnitude, the integral component addresses accumulated error over time, and the derivative component anticipates future trends. This three-part control strategy minimizes oscillation while maintaining tight setpoint adherence. Ethernet connectivity enables remote monitoring and data logging for compliance documentation.
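
The three-term strategy described above can be sketched as a small discrete PID class. This is an illustrative implementation, not the controller firmware: the gains, the -100% to +100% output scale (cooling to heating), the simple anti-windup rule, and the toy chamber model are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    out_min: float = -100.0  # full cooling (hypothetical output scale, in %)
    out_max: float = 100.0   # full heating
    _integral: float = 0.0
    _prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured                      # proportional: current error
        derivative = 0.0 if self._prev_error is None else (error - self._prev_error) / dt
        candidate = self._integral + error * dt          # integral: accumulated error
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        clamped = max(self.out_min, min(self.out_max, output))
        if clamped == output:
            # Anti-windup: only accumulate the integral while the output is not saturated
            self._integral = candidate
        self._prev_error = error
        return clamped


pid = PID(kp=8.0, ki=0.5, kd=1.0)
temp = 25.0
for _ in range(600):
    power = pid.update(setpoint=80.0, measured=temp, dt=1.0)
    # Toy plant: output heats or cools the air, losses pull toward 25 °C ambient
    temp += 0.01 * power - 0.005 * (temp - 25.0)
print(round(temp, 1))
```

Freezing the integral while the output is saturated is one common way to limit overshoot after large setpoint changes; a real controller may use a different scheme.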

Feedback Loop Optimization

Control system performance depends on proper tuning of gain parameters, update rates, and hysteresis bands. Aggressive tuning achieves rapid response but risks instability, while conservative tuning sacrifices speed for guaranteed stability. Optimal tuning balances these competing objectives, considering thermal mass, insulation properties, and refrigeration capacity. Well-optimized systems reach setpoint quickly without overshoot and maintain stability despite load changes.

| Chamber Model | Internal Dimensions (mm) | Volume (L) | Temperature Range |
| --- | --- | --- | --- |
| T-100 | 400×500×500 | 100 | -20°C to +150°C (A) / -40°C to +150°C (B) / -70°C to +150°C (C) |
| T-225 | 500×600×750 | 225 | -20°C to +150°C (A) / -40°C to +150°C (B) / -70°C to +150°C (C) |
| T-500 | 700×800×900 | 500 | -20°C to +150°C (A) / -40°C to +150°C (B) / -70°C to +150°C (C) |
| T-800 | 800×1000×1000 | 800 | -20°C to +150°C (A) / -40°C to +150°C (B) / -70°C to +150°C (C) |
| T-1000 | 1000×1000×1000 | 1000 | -20°C to +150°C (A) / -40°C to +150°C (B) / -70°C to +150°C (C) |
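
As a quick arithmetic check, the listed volumes can be recomputed from the internal dimensions. The short script below does this using the figures from the table; it shows that the volume column is a nominal value (the T-500 works out to 504 L).

```python
# (dimension 1, dimension 2, dimension 3 in mm, nominal volume in L) from the table
models = {
    "T-100": (400, 500, 500, 100),
    "T-225": (500, 600, 750, 225),
    "T-500": (700, 800, 900, 500),
    "T-800": (800, 1000, 1000, 800),
    "T-1000": (1000, 1000, 1000, 1000),
}

computed = {}
for name, (a, b, c, nominal_l) in models.items():
    computed[name] = a * b * c / 1_000_000  # mm^3 to litres
    print(f"{name}: computed {computed[name]:.0f} L, nominal {nominal_l} L")
```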

Improving Measurement Confidence Through Regular Chamber Calibration

Establishing Calibration Intervals

Calibration frequency depends on usage intensity, environmental conditions, and accuracy requirements. Laboratories performing daily measurements may require quarterly verification, while occasional users might extend intervals to annual cycles. Risk-based approaches consider the consequences of measurement failure, assigning shorter intervals to applications where errors have severe implications. Documentation of historical performance helps organizations optimize intervals, balancing cost against risk.

Documentation and Traceability Records

Comprehensive calibration certificates document reference standards used, environmental conditions, measurement results, and expanded uncertainty calculations. These records demonstrate compliance with ISO/IEC 17025 and industry-specific regulations. Certificate retention periods typically span five to ten years, supporting audits and investigations. Electronic record-keeping systems with secure timestamps and digital signatures prevent unauthorized modifications while improving accessibility.

Uncertainty Budget Development

Measurement uncertainty quantifies the doubt surrounding calibration results, encompassing contributions from reference standards, resolution limits, stability variations, and environmental influences. Uncertainty budgets combine these sources using statistical methods, expressing total uncertainty as a coverage interval with specified confidence level. Understanding uncertainty helps users determine whether measurement systems satisfy application requirements and identify opportunities for improvement.

| Uncertainty Source | Typical Contribution | Mitigation Strategy |
| --- | --- | --- |
| Reference Standard | ±0.05°C to ±0.15°C | Use higher-accuracy standards with valid certificates |
| Chamber Stability | ±0.25°C to ±0.5°C | Improve insulation and optimize control algorithms |
| Sensor Resolution | ±0.001°C | Select high-resolution instrumentation |
| Loading Effects | ±0.1°C to ±0.3°C | Minimize thermal mass and allow equilibration time |
| Spatial Uniformity | ±1.0°C to ±2.0°C | Enhance air circulation and verify uniformity mapping |
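
One common way to combine such sources is a root-sum-square budget. The sketch below takes the upper end of each range in the table, treats each value as the half-width of a rectangular distribution (an assumption; a real budget assigns a distribution to each source individually), and reports an expanded uncertainty with coverage factor k = 2.

```python
import math

# Upper end of each range in the table, treated as rectangular half-widths (°C)
half_widths = {
    "reference_standard": 0.15,
    "chamber_stability": 0.5,
    "sensor_resolution": 0.001,
    "loading_effects": 0.3,
    "spatial_uniformity": 2.0,
}


def standard_uncertainty(half_width: float) -> float:
    """Standard uncertainty of a rectangular distribution with the given half-width."""
    return half_width / math.sqrt(3)


def combined_standard_uncertainty(sources: dict[str, float]) -> float:
    """Root-sum-square of the individual standard uncertainties (assumes independence)."""
    return math.sqrt(sum(standard_uncertainty(a) ** 2 for a in sources.values()))


u_c = combined_standard_uncertainty(half_widths)
expanded = 2.0 * u_c  # k = 2, roughly 95% coverage for a near-normal result
print(f"combined standard uncertainty: {u_c:.2f} °C")
print(f"expanded uncertainty (k=2): ±{expanded:.2f} °C")
```

Because the terms add in quadrature, the largest contributor (spatial uniformity in this example) dominates the total, so that is where improvement effort pays off first.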

LIB Industry Temperature Calibration Chamber: Guaranteed Precision Performance

Advanced Safety Features for Battery Testing

LIB temperature calibration chambers incorporate specialized safety options designed for lithium-ion battery testing, addressing fire and explosion risks inherent in electrochemical energy storage devices. Over-temperature protection activates emergency cooling when chamber temperature exceeds safe limits. Over-current protection prevents electrical system damage during fault conditions. Refrigerant high-pressure protection safeguards compressors from destructive pressure buildup. Earth leakage protection detects insulation failures that could create electrocution hazards. These interlocking safety systems enable confident testing of potentially hazardous materials.

Customizable Temperature Ranges

Three distinct temperature range configurations accommodate diverse calibration requirements. Range A (-20°C to +150°C) suits most commercial and industrial applications. Range B (-40°C to +150°C) addresses cold storage verification and automotive testing. Range C (-70°C to +150°C) supports aerospace, cryogenic research, and extreme environment simulation. This flexibility eliminates the need for multiple chambers, reducing capital investment and laboratory footprint while maintaining capability breadth.

Comprehensive Turn-Key Solutions

LIB Industry provides complete environmental testing solutions encompassing research, design, manufacturing, commissioning, delivery, installation, and operator training. This integrated approach ensures chambers arrive properly configured, validated, and ready for immediate use. Technical support continues post-installation, with calibration services, preventive maintenance programs, and application assistance available throughout equipment lifecycle.

Conclusion

Temperature calibration chambers form the cornerstone of reliable measurement systems across industries demanding thermal accuracy. Through precise control mechanisms, stable thermal environments, and traceable verification processes, these specialized instruments reduce the measurement uncertainty that could compromise product quality or safety. Investment in quality calibration equipment, combined with proper maintenance and documentation practices, delivers long-term returns through reduced errors, enhanced regulatory compliance, and improved customer confidence in measurement results.

FAQs

What temperature range do calibration chambers typically cover?

Professional calibration chambers offer ranges from -70°C to +150°C, accommodating most industrial verification requirements. Configuration options include -20°C to +150°C for standard applications, -40°C to +150°C for cold environment testing, and -70°C to +150°C for extreme condition simulation. Selection depends on the operating range of instruments requiring calibration.

How often should temperature calibration chambers undergo verification?

Verification frequency depends on usage intensity and accuracy requirements, typically ranging from quarterly to annual intervals. High-utilization laboratories performing critical measurements may require more frequent verification, while occasional users can extend intervals. Risk-based approaches consider consequences of measurement failures when establishing schedules that balance cost against quality assurance needs.

What factors influence temperature uniformity within calibration chambers?

Uniformity depends on insulation quality, air circulation effectiveness, chamber loading, and thermal stabilization time. Centrifugal fans create forced convection that homogenizes temperature throughout the workspace. Proper sensor placement verifies spatial consistency. Loading test specimens alters thermal mass and disrupts airflow patterns, necessitating adequate equilibration periods before measurements commence to ensure accuracy.

LIB Industry stands as a leading temperature calibration chamber manufacturer and supplier, delivering turn-key environmental testing solutions worldwide. Our chambers guarantee measurement accuracy through proven technology and comprehensive support services. Contact our team at ellen@lib-industry.com to explore how our calibration chambers can elevate your quality assurance protocols.

Accelerated Shelf Life Testing Equipment for Food and Pharmaceuticals

Accelerated shelf life testing equipment provides manufacturers with predictive insights into product degradation patterns without waiting months or years for real-time results. These sophisticated environmental chambers simulate extreme storage conditions - elevated temperatures, controlled humidity levels, and cyclic stress - to compress years of natural aging into weeks or months. By controlling multiple environmental parameters simultaneously, food producers and pharmaceutical companies can validate expiration dates, optimize formulations, and ensure product safety while significantly reducing time-to-market and development costs.

What Is Accelerated Shelf Life Testing and Why Is It Important?

The Scientific Foundation of Accelerated Aging Studies

Accelerated shelf life testing builds on the Arrhenius relationship, under which chemical reaction rates rise exponentially with temperature. A common rule of thumb derived from it, the Q10 approximation, holds that reaction rates roughly double with every 10°C temperature increase. This relationship allows scientists to predict long-term stability by exposing products to intensified environmental stress. The methodology transforms what would require 24 months of real-time observation into 3-6 months of accelerated conditions, providing manufacturers with actionable stability data while products are still in development phases.
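
The doubling rule translates into a simple acceleration factor, Q10 raised to the power (ΔT / 10). A minimal sketch, assuming Q10 = 2 and hypothetical storage and test temperatures; actual Q10 values are product-specific and must be determined experimentally.

```python
def q10_acceleration_factor(test_temp_c: float, storage_temp_c: float, q10: float = 2.0) -> float:
    """Rate multiplier at the test temperature relative to the storage temperature."""
    return q10 ** ((test_temp_c - storage_temp_c) / 10.0)


def accelerated_duration_months(target_months: float, test_temp_c: float,
                                storage_temp_c: float, q10: float = 2.0) -> float:
    """Test duration needed to simulate target_months of storage under the Q10 model."""
    return target_months / q10_acceleration_factor(test_temp_c, storage_temp_c, q10)


# Hypothetical study: 24 months at 25 °C simulated at 45 °C
af = q10_acceleration_factor(45.0, 25.0)
print(f"acceleration factor: {af:.1f}x")
print(f"test duration: {accelerated_duration_months(24.0, 45.0, 25.0):.1f} months")
```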

Critical Business Implications for Manufacturers

The financial impact of inaccurate shelf life predictions extends beyond simple waste calculations. Underestimated expiration dates result in premature product disposal, damaged brand reputation, and potential legal liabilities. Conversely, overstated shelf life claims expose consumers to degraded products, triggering regulatory penalties and market recalls. Accelerated testing equipment bridges this uncertainty gap, delivering empirical evidence that supports confident labeling decisions and protects both public health and corporate assets.

Regulatory Compliance and Market Access Requirements

Global regulatory bodies mandate stability testing before product commercialization. The FDA requires pharmaceutical manufacturers to demonstrate potency retention throughout labeled shelf life, while food safety authorities demand microbial stability verification. Accelerated shelf life testing equipment generates the documentation necessary for regulatory submissions, including stability protocols, trending analysis, and degradation rate calculations that satisfy international standards across multiple jurisdictions.

Principles Behind Temperature and Humidity Stress Testing

Thermodynamic Effects on Molecular Degradation

Elevated temperatures accelerate oxidation reactions, enzymatic activity, and molecular breakdown mechanisms that govern product deterioration. In pharmaceutical formulations, heat stress reveals degradation pathways that produce impurities or reduce active ingredient potency. Food matrices experience lipid oxidation, vitamin degradation, and protein denaturation at accelerated rates. Understanding these thermal responses allows formulators to predict stability profiles and establish appropriate storage recommendations for commercial distribution.

Moisture-Mediated Degradation Pathways

Humidity control reveals moisture-sensitive failure modes that plague hygroscopic materials. Water activity influences microbial growth potential, chemical hydrolysis reactions, and physical property changes like caking or crystallization. Pharmaceutical tablets may lose hardness or dissolution characteristics when exposed to elevated moisture, while food products experience texture degradation and accelerated spoilage. Precise humidity regulation within test chambers replicates real-world storage conditions across diverse climatic zones.

Synergistic Environmental Stress Factors

Combined temperature and humidity exposure creates multiplicative degradation effects that single-parameter testing cannot capture. The interaction between thermal energy and moisture availability accelerates complex deterioration mechanisms, revealing formulation weaknesses that might remain hidden under ambient conditions. This synergistic stress approach provides conservative stability estimates that ensure product quality under worst-case distribution and storage scenarios encountered in global supply chains.

Key Parameters Controlled in Shelf Life Test Chambers

| Parameter | Typical Range | Control Precision | Testing Significance |
| --- | --- | --- | --- |
| Temperature | -86°C to +150°C | ±0.5°C | Arrhenius acceleration factor |
| Relative Humidity | 20% to 98% RH | ±2.5% RH | Moisture sensitivity assessment |
| Temperature Cycling | Programmable profiles | 1°C/min cooling, 3°C/min heating | Thermal stress resistance |

Temperature Range Capabilities and Applications

Modern accelerated shelf life testing equipment offers extraordinary temperature span from deep freeze conditions to high-temperature stress. The -86°C capability supports frozen pharmaceutical stability studies and ultra-cold food storage simulation, while the +150°C upper limit enables heat stress testing for thermally processed foods and temperature-resistant packaging materials. This versatility accommodates diverse product categories within a single testing platform, maximizing laboratory efficiency and capital equipment utilization.

Humidity Control Systems and Precision

Advanced humidification systems maintain stable relative humidity through surface evaporation technology and automatic water supply mechanisms. The 20% to 98% RH range covers arid desert conditions through tropical rainforest environments, enabling manufacturers to validate product performance across global distribution networks. Stainless steel evaporators prevent microbial contamination while providing consistent moisture generation throughout extended testing protocols, ensuring reproducible results across multiple study batches.

Programmable Environmental Profiles

Sophisticated microprocessor controllers enable complex testing sequences that replicate realistic distribution scenarios. Programmable temperature and humidity cycles simulate day-night fluctuations, seasonal transitions, and transportation stress encountered during product logistics. Touch screen interfaces with Ethernet connectivity facilitate remote monitoring, automated data logging, and seamless integration with laboratory information management systems, enhancing operational efficiency and regulatory compliance documentation.
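
A programmable profile of this kind can be modeled as a list of ramp-and-soak segments. The sketch below is a hypothetical data structure, not the controller's actual program format; it estimates total run time using the 3°C/min heating and 1°C/min cooling rates listed in the parameter table and ignores the time humidity needs to settle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    target_temp_c: float
    target_rh_pct: float
    soak_min: float  # hold time once the target temperature is reached


def profile_duration_min(start_temp_c: float, segments: list[Segment],
                         heat_rate: float = 3.0, cool_rate: float = 1.0) -> float:
    """Total ramp plus soak time in minutes, assuming constant ramp rates in °C/min."""
    total = 0.0
    current = start_temp_c
    for seg in segments:
        delta = seg.target_temp_c - current
        rate = heat_rate if delta > 0 else cool_rate
        total += abs(delta) / rate + seg.soak_min
        current = seg.target_temp_c
    return total


# Hypothetical day-night cycle: 8 h warm and humid, then 8 h cooler and drier
day_night = [Segment(40.0, 75.0, 480.0), Segment(25.0, 60.0, 480.0)]
print(profile_duration_min(25.0, day_night), "minutes per cycle")
```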

How Accelerated Testing Correlates with Real-Time Stability Data

Mathematical Models Linking Accelerated and Ambient Conditions

The Q10 method and Arrhenius equation provide mathematical frameworks for extrapolating accelerated test results to ambient storage predictions. These models calculate acceleration factors based on temperature differentials between test conditions and intended storage environments. Correlation coefficients derived from parallel accelerated and real-time studies validate predictive accuracy, enabling manufacturers to establish defensible shelf life claims supported by statistical confidence intervals and scientific precedent.
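
For the Arrhenius form, the acceleration factor between a test temperature and a storage temperature is exp[(Ea / R) · (1/T_storage - 1/T_test)], with temperatures in kelvin. The sketch below assumes a single dominant degradation reaction and a hypothetical activation energy of 83 kJ/mol; in practice Ea is estimated from stability data at several temperatures.

```python
import math

GAS_CONSTANT = 8.314462618  # J/(mol*K)


def arrhenius_acceleration_factor(test_temp_c: float, storage_temp_c: float,
                                  activation_energy_j_mol: float) -> float:
    """Ratio of reaction rate at the test temperature to the rate at storage temperature."""
    t_test_k = test_temp_c + 273.15
    t_storage_k = storage_temp_c + 273.15
    exponent = (activation_energy_j_mol / GAS_CONSTANT) * (1.0 / t_storage_k - 1.0 / t_test_k)
    return math.exp(exponent)


EA = 83_000.0  # hypothetical activation energy in J/mol
af = arrhenius_acceleration_factor(40.0, 25.0, EA)
print(f"acceleration factor at 40 °C vs 25 °C: {af:.2f}x")
```

Unlike a fixed Q10 value, this form lets the acceleration factor depend on the measured activation energy, which is why products with different degradation chemistry can show very different factors at the same test condition.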

Validation Through Comparative Studies

Rigorous validation protocols compare accelerated predictions against actual real-time stability data collected over extended periods. These parallel studies identify product-specific degradation mechanisms and confirm that accelerated conditions produce identical failure modes to ambient aging. Successful correlation demonstrates that accelerated testing accurately forecasts long-term performance, while discrepancies reveal complex degradation pathways requiring modified testing approaches or formulation adjustments.

Limitations and Conservative Estimation Practices

Accelerated testing cannot perfectly replicate every aspect of natural aging, particularly for products with complex multi-component interactions or phase transitions at specific temperatures. Conservative shelf life estimation applies safety factors to accelerated predictions, accounting for potential model limitations and biological variability. This approach prioritizes consumer safety while providing manufacturers with reasonable expiration dates supported by empirical evidence and sound scientific methodology.

| Test Condition | Acceleration Factor | Equivalent Ambient Time | Typical Application |
| --- | --- | --- | --- |
| 40°C / 75% RH | 4-6x | 6 months = 2-3 years | Pharmaceutical zone IV climatic conditions |
| 50°C / 20% RH | 8-12x | 3 months = 2-3 years | Dry food products, supplements |
| 60°C / 90% RH | 15-20x | 2 months = 2.5-3.3 years | Extreme stress testing, tropical storage |
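
The equivalent ambient times follow from multiplying the test duration by the acceleration factor range. The sketch below reproduces that arithmetic from the table's figures and adds an optional safety factor as one hypothetical way to apply the conservative estimation practice described above.

```python
def equivalent_ambient_months(test_months: float, af_low: float, af_high: float,
                              safety_factor: float = 1.0) -> tuple[float, float]:
    """Range of simulated ambient storage time, optionally reduced by a safety factor."""
    return (test_months * af_low / safety_factor, test_months * af_high / safety_factor)


# (test duration in months, low factor, high factor) taken from the table
rows = {
    "40°C / 75% RH": (6, 4, 6),
    "50°C / 20% RH": (3, 8, 12),
    "60°C / 90% RH": (2, 15, 20),
}
for condition, (months, low, high) in rows.items():
    lo_m, hi_m = equivalent_ambient_months(months, low, high)
    print(f"{condition}: {lo_m / 12:.1f}-{hi_m / 12:.1f} years")
```

For the 60°C / 90% RH row this gives 30 to 40 months, or about 2.5 to 3.3 years.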

Regulatory Guidelines: FDA, ICH, and ISO Standards for Shelf Life Studies

FDA Requirements for Pharmaceutical Stability Testing

The FDA guidance documents specify comprehensive stability testing protocols covering storage conditions, sampling frequencies, and analytical testing parameters. Pharmaceutical manufacturers must conduct stability studies at labeled storage conditions plus accelerated conditions to support expiration dating. The regulatory framework requires statistical analysis of trending data, specification limits for degradation products, and justification for proposed shelf life based on worst-case stability profiles observed across representative production batches.

ICH Harmonized Tripartite Guidelines

The International Council for Harmonisation establishes globally recognized stability testing standards through ICH Q1A-Q1F guidelines. These harmonized protocols define climatic zones, recommend specific storage conditions, and establish minimum study durations for new drug applications. ICH guidelines facilitate international product registration by creating consistent stability data expectations across regulatory agencies in the United States, European Union, Japan, and other ICH member regions.

ISO Standards for Environmental Test Chambers

ISO/IEC 17025 accreditation requirements govern laboratory competence and equipment calibration procedures for stability testing facilities. Accelerated shelf life testing equipment performance qualification follows ISO standards for temperature uniformity, humidity accuracy, and sensor calibration traceability. Regular verification testing ensures continued compliance with documented performance specifications, maintaining data integrity and regulatory acceptability throughout the equipment operational lifetime.

Selecting the Right Accelerated Shelf Life Testing Equipment for Your Product

Chamber Size and Capacity Planning

Interior volume selection depends on sample quantity requirements, product dimensions, and simultaneous study capacity needs. LIB Industry offers chamber sizes from 100L to 1000L, accommodating everything from small pharmaceutical vial studies to large-scale food packaging evaluations. Adequate chamber capacity ensures proper air circulation around samples while providing flexibility for concurrent testing programs without compromising environmental uniformity or temperature stability across the testing space.

Temperature Range Matching to Application Needs

Product-specific stability protocols dictate necessary temperature capabilities. Frozen food products require chambers with -40°C or -70°C capabilities, while heat-stressed pharmaceutical formulations demand reliable performance at elevated temperatures. The TH-500 model provides temperature ranges from -86°C to +150°C, supporting diverse testing requirements within a single platform. Selecting appropriate temperature specifications prevents equipment limitations from constraining future research directions or product portfolio expansion.

Advanced Features Enhancing Testing Capabilities

Modern accelerated shelf life testing equipment incorporates sophisticated features beyond basic temperature and humidity control. French TECUMSEH compressor technology delivers reliable mechanical refrigeration with environmental refrigerant compliance. Programmable color LCD touchscreen controllers enable intuitive operation and complex protocol programming. Automatic water supply systems with purification maintain consistent humidification without operator intervention. These advanced capabilities enhance testing precision, operational convenience, and long-term reliability for demanding laboratory applications.

| Model | Internal Dimension | Volume | Temperature Range Options | Ideal Application |
| --- | --- | --- | --- | --- |
| TH-100 | 400×500×500 mm | 100L | -20°C to -70°C options | Research labs, small batch testing |
| TH-500 | 700×800×900 mm | 500L | -20°C to -86°C options | Mid-scale pharmaceutical, food studies |
| TH-1000 | 1000×1000×1000 mm | 1000L | -20°C to -70°C options | High-volume production testing |

LIB Industry Accelerated Shelf Life Testing Equipment: Reliable Product Lifecycle Data

Precision Engineering for Reproducible Results

LIB Industry accelerated shelf life testing equipment achieves ±0.5°C temperature fluctuation and ±2.5% RH humidity deviation, delivering the measurement precision essential for defensible stability data. French TECUMSEH compressor technology provides mechanical compression refrigeration with exceptional reliability and energy efficiency. Nichrome heating elements enable rapid temperature ramping at 3°C per minute, minimizing transition times between testing phases. This precision engineering ensures reproducible environmental conditions across extended study durations.

Comprehensive Quality and Validation Support

Each LIB chamber includes factory performance qualification documentation, calibration certificates traceable to national standards, and comprehensive operational protocols. The 36-month warranty coverage reflects manufacturing confidence in equipment durability and long-term reliability. Preventive maintenance contracts provide regular servicing and annual calibration, maintaining validated status throughout the equipment lifecycle. Onsite training ensures laboratory personnel understand proper operation, routine maintenance procedures, and troubleshooting techniques for uninterrupted testing operations.

Global Technical Support and Application Expertise

LIB Industry provides worldwide technical support addressing operational questions, protocol development assistance, and equipment troubleshooting. Application specialists collaborate with customers to optimize testing strategies, interpret stability data, and navigate regulatory requirements across different geographical markets. This comprehensive support infrastructure transforms equipment purchase into a long-term partnership, ensuring customers extract maximum value from their stability testing investments while maintaining regulatory compliance and scientific rigor.

Conclusion

Accelerated shelf life testing equipment represents an indispensable tool for modern food and pharmaceutical development, compressing years of aging analysis into manageable timeframes while maintaining scientific validity and regulatory acceptability. The sophisticated environmental control capabilities offered by advanced chambers enable manufacturers to predict product performance, optimize formulations, and establish confident expiration dating supported by empirical evidence. As regulatory scrutiny intensifies and global competition demands faster product launches, investment in reliable accelerated testing infrastructure becomes essential for maintaining market position and ensuring consumer safety.

FAQs

How long does accelerated shelf life testing typically require compared to real-time studies?

Accelerated testing typically compresses 24-36 months of ambient storage into 3-6 months of elevated temperature and humidity exposure. The exact acceleration factor depends on product characteristics and selected test conditions, with typical multipliers ranging from 4x to 20x based on temperature differential and degradation mechanisms.

Can accelerated testing completely replace real-time stability studies for regulatory submissions?

Accelerated studies support initial shelf life estimates and expedite product launches, but regulatory agencies typically require ongoing real-time stability confirmation studies. These parallel programs validate accelerated predictions and provide long-term trending data demonstrating continued product quality throughout the proposed expiration period under actual storage conditions.

What chamber specifications are most critical for pharmaceutical stability testing compliance?

Temperature uniformity within ±2.0°C, humidity precision of ±2.5% RH, and documented calibration traceability represent essential specifications for pharmaceutical applications. Additionally, programmable controllers enabling ICH-compliant temperature and humidity profiles, automated data logging capabilities, and alarm systems alerting operators to environmental excursions ensure regulatory compliance and data integrity.
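
An excursion alarm of the kind mentioned above reduces to comparing each logged reading against the setpoint tolerances. A minimal sketch, assuming a hypothetical log format and using the ±2.0°C and ±2.5% RH limits quoted in the answer:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    minute: int
    temp_c: float
    rh_pct: float


def find_excursions(readings: list[Reading], temp_setpoint: float, rh_setpoint: float,
                    temp_tol: float = 2.0, rh_tol: float = 2.5) -> list[Reading]:
    """Return readings where temperature or humidity falls outside its tolerance band."""
    return [
        r for r in readings
        if abs(r.temp_c - temp_setpoint) > temp_tol or abs(r.rh_pct - rh_setpoint) > rh_tol
    ]


# Hypothetical log from a 40 °C / 75% RH study
log = [
    Reading(0, 40.1, 75.3),
    Reading(10, 42.4, 74.8),  # temperature excursion
    Reading(20, 39.8, 78.0),  # humidity excursion
    Reading(30, 40.0, 75.0),
]
for r in find_excursions(log, temp_setpoint=40.0, rh_setpoint=75.0):
    print(f"minute {r.minute}: {r.temp_c} °C, {r.rh_pct}% RH out of tolerance")
```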

Ready to enhance your product stability testing capabilities? LIB Industry, a leading accelerated shelf life testing equipment manufacturer and supplier, delivers precision-engineered environmental chambers backed by comprehensive technical support and global service infrastructure. Contact our application specialists at ellen@lib-industry.com to discuss your specific testing requirements and discover how our solutions can accelerate your product development timeline.

Understanding IPX9k Waterproof Testing Under IEC 60529

IPX9k waterproof testing represents the most stringent level of liquid ingress protection, specifically designed to evaluate equipment against extreme high-pressure and high-temperature water jets. This testing, conducted using IEC 60529 IPX9k equipment, has become increasingly critical across automotive, industrial machinery, and food processing sectors where equipment faces intensive cleaning protocols. Originally developed for road vehicles requiring regular intensive cleaning - such as dump trucks and concrete mixers - the standard has expanded into diverse applications. Understanding the technical parameters, testing methodologies, and compliance requirements enables manufacturers to design products that withstand the harshest environmental challenges while meeting international regulatory standards.

What Does the IPX9k Rating Mean in IEC 60529?

Decoding the IP Code Structure

The Ingress Protection rating system uses a two-digit classification where the first digit indicates solid particle protection and the second addresses liquid ingress resistance. The "X" designation in IPX9k signifies that dust protection testing was not conducted or is not applicable, while the "9k" denotes the highest level of water protection against pressurized, high-temperature spray applications.
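As a rough illustration of the structure described above, the sketch below splits a code such as IPX9k into its solid digit, liquid digit, and "K" suffix. The names `IPCode` and `parse_ip_code` are hypothetical, and the pattern is a deliberate simplification: it ignores the optional additional and supplementary letters that IEC 60529 allows, and it does not handle codes with a "K" after the first digit.

```python
import re
from typing import NamedTuple, Optional


class IPCode(NamedTuple):
    solids: Optional[int]   # None when the code uses "X" (not tested / not applicable)
    liquids: Optional[int]  # None when the code uses "X"
    k_suffix: bool          # True when the liquid digit carries a "K" suffix


# Simplified pattern: "IP", one solid character, one liquid character, optional "K".
_IP_PATTERN = re.compile(r"^IP([0-6X])([0-9X])(K?)$", re.IGNORECASE)


def parse_ip_code(code: str) -> IPCode:
    """Split an IP code string into its parts; raise ValueError if it does not match."""
    match = _IP_PATTERN.match(code.strip())
    if match is None:
        raise ValueError(f"not a recognised IP code: {code!r}")
    solids, liquids, suffix = match.groups()
    return IPCode(
        solids=None if solids.upper() == "X" else int(solids),
        liquids=None if liquids.upper() == "X" else int(liquids),
        k_suffix=suffix.upper() == "K",
    )


print(parse_ip_code("IPX9k"))
print(parse_ip_code("IP67"))
```

Representing "X" as `None` rather than 0 keeps "not tested" distinct from "tested, no protection", which is the distinction the IP code itself makes.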

The "K" Designation Significance

The "K" suffix marks ratings with enhanced water pressure requirements, distinguishing them from the standard IP ratings. This designation originated from German automotive standards and was later incorporated into international protocols. The "K" variants include 4K, 6K, and 9K, each defining its own strict water pressure criteria.

Standard Evolution and Current Framework

IPX9k testing was initially established under DIN 40050-9, which extended the IEC 60529 rating system. For road vehicles, DIN 40050-9 has since been superseded by ISO 20653:2013. The standard addresses specific industry needs where conventional waterproofing proves inadequate. Modern certification requires comprehensive documentation demonstrating compliance with temperature, pressure, flow rate, and duration parameters.

High-Pressure and High-Temperature Water Jet Principles

Pressure Parameters and Their Impact

IPX9k testing requires water pressure ranging from 8000 to 10000 kPa (80-100 bar), creating significant mechanical stress on enclosure seals and joints. This pressure level simulates industrial steam cleaning equipment and automated wash systems. The sustained pressure exposes potential vulnerabilities in gasket compression, seal integrity, and material fatigue that lower-rated tests cannot reveal.

Temperature Requirements and Material Stress

Test water must be maintained at +80°C to +88°C, creating thermal expansion challenges for different materials within the enclosure assembly. Water at this temperature has a lower viscosity than cold water, potentially allowing it to penetrate microscopic gaps that cold water cannot access. Combined thermal and mechanical stress tests the product under realistic operational conditions where temperature fluctuations occur during cleaning cycles.

Flow Rate and Distribution Dynamics

The water flow rate specification of 14-16 liters per minute ensures consistent spray intensity throughout testing. Flow rate directly correlates with the kinetic energy impacting the enclosure surface. Proper calibration prevents test variations that could produce inconsistent results across different testing facilities.

Equipment Design Requirements for IPX9k Testing

Testing Chamber Configuration

IEC 60529 IPX9k equipment typically features an enclosed chamber constructed from corrosion-resistant materials. The R9K-1200 model includes a 1000-liter internal volume with 1000×1000×1000mm internal dimensions, providing adequate space for various specimen sizes. The chamber incorporates observation windows with double-layer insulating glass and wipers for monitoring test progress without interrupting procedures.

Spray Nozzle Specifications and Positioning

Test nozzles must be positioned at a distance of 100-150mm from the specimen surface, ensuring proper pressure delivery without creating artificial stress concentrations. The system employs four spray nozzles configured to deliver water at precise angles: 0°, 30°, 60°, and 90°. Each angular position receives 30 seconds of exposure, totaling 120 seconds per complete test cycle.

Rotating Platform and Sample Mounting

The testing platform rotates at 5±1 revolutions per minute, exposing all specimen surfaces to the water jets. Platform diameter typically measures 600mm with adjustable height ranging from 200-400mm to accommodate various product dimensions. Proper specimen mounting prevents movement during rotation while ensuring complete surface exposure.
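The figures quoted in this section can be combined into a quick sanity check of one test cycle. The sketch below uses only the values stated above (four angles, 30 seconds each, 5±1 rpm, 14-16 L/min); the function and constant names are illustrative and not part of any standard or controller interface.

```python
# Values taken from the text above; names are illustrative only.
ANGLES_DEG = (0, 30, 60, 90)
SECONDS_PER_ANGLE = 30
PLATFORM_RPM_RANGE = (4, 6)        # 5 ± 1 rpm
FLOW_L_PER_MIN_RANGE = (14, 16)


def revolutions(rpm: float, seconds: float) -> float:
    """Platform revolutions completed in the given time."""
    return rpm * seconds / 60


def water_volume_l(flow_l_per_min: float, seconds: float) -> float:
    """Water delivered in the given time at a constant flow rate."""
    return flow_l_per_min * seconds / 60


total_seconds = len(ANGLES_DEG) * SECONDS_PER_ANGLE
print("cycle duration (s):", total_seconds)                      # 120

for rpm in PLATFORM_RPM_RANGE:
    print(f"{rpm} rpm -> {revolutions(rpm, SECONDS_PER_ANGLE)} revolutions per angle")

for flow in FLOW_L_PER_MIN_RANGE:
    print(f"{flow} L/min -> {water_volume_l(flow, total_seconds)} L per cycle")
```

At the nominal 5 rpm the specimen completes 2.5 revolutions under each nozzle angle, and a full 120-second cycle delivers 28 to 32 liters of water.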

| Component | Specification | Purpose |
| --- | --- | --- |
| Water Pressure | 8000-10000 kPa | Simulates industrial cleaning pressure |
| Water Temperature | 80-88°C | Tests thermal expansion effects |
| Flow Rate | 14-16 L/min | Ensures consistent spray intensity |
| Nozzle Distance | 100-150 mm | Maintains proper pressure delivery |
| Platform Speed | 5±1 rpm | Exposes all surfaces uniformly |
| Test Duration | 30s per angle | Provides adequate exposure time |
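The ranges in the table above lend themselves to an automated check of logged readings. The following is a minimal sketch, assuming readings arrive as a plain dictionary keyed by hypothetical names such as `water_pressure`; it does not reflect any particular chamber's data format.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Limit:
    low: float
    high: float
    unit: str

    def contains(self, value: float) -> bool:
        return self.low <= value <= self.high


# Ranges copied from the table above; the dictionary keys are hypothetical names.
IPX9K_LIMITS: Dict[str, Limit] = {
    "water_pressure": Limit(8000, 10000, "kPa"),
    "water_temperature": Limit(80, 88, "°C"),
    "flow_rate": Limit(14, 16, "L/min"),
    "nozzle_distance": Limit(100, 150, "mm"),
    "platform_speed": Limit(4, 6, "rpm"),  # 5 ± 1 rpm
}


def out_of_range(readings: Dict[str, float]) -> List[str]:
    """Return one message per missing or out-of-range parameter (empty list = all OK)."""
    problems = []
    for name, limit in IPX9K_LIMITS.items():
        if name not in readings:
            problems.append(f"{name}: no reading")
        elif not limit.contains(readings[name]):
            problems.append(
                f"{name}: {readings[name]} {limit.unit} outside "
                f"{limit.low}-{limit.high} {limit.unit}"
            )
    return problems


sample = {
    "water_pressure": 9000,
    "water_temperature": 75,   # too cold
    "flow_rate": 15,
    "nozzle_distance": 120,
    "platform_speed": 5,
}
print(out_of_range(sample))
```

Returning a list of messages rather than a single boolean makes it easier to copy the findings straight into a test report.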

How Do Test Conditions Differ from IPX5 and IPX6 Standards?

Pressure Differential Analysis

IPX5 testing uses approximately 30 kPa water pressure delivered through a 6.3mm nozzle, while IPX6 increases to 100 kPa through a 12.5mm nozzle. IPX9k escalates pressure to 8000-10000 kPa, which is 80-100 times the IPX6 value. This jump of roughly two orders of magnitude creates fundamentally different mechanical stress patterns on enclosure materials and sealing systems.
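The pressure comparison is simple arithmetic, and a short sketch makes the ratios explicit. It uses only the pressures quoted above; the constant and function names are illustrative.

```python
# Pressures quoted in the text, in kPa.
IPX5_KPA = 30
IPX6_KPA = 100
IPX9K_KPA_RANGE = (8000, 10000)

KPA_PER_BAR = 100  # 1 bar = 100 kPa


def ratio(higher_kpa: float, lower_kpa: float) -> float:
    """How many times larger the first pressure is than the second."""
    return higher_kpa / lower_kpa


for p in IPX9K_KPA_RANGE:
    print(
        f"{p} kPa = {p / KPA_PER_BAR:.0f} bar: "
        f"{ratio(p, IPX6_KPA):.0f}x IPX6, {ratio(p, IPX5_KPA):.0f}x IPX5"
    )
```

Against the IPX5 figure of 30 kPa, the same calculation gives a factor of roughly 267 to 333.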

Temperature Parameter Comparisons

Standard IPX5 and IPX6 tests utilize ambient temperature water, typically 15-35°C depending on facility conditions. IPX9k's requirement for 80-88°C water introduces thermal stress absent in lower ratings. Hot water affects elastomer seals, adhesive bonds, and thermal expansion coefficients, revealing vulnerabilities that room-temperature testing cannot identify.

Testing Duration and Coverage

IPX5 and IPX6 tests require a minimum of one minute per square meter of enclosure surface area, with water applied from specific angles. IPX9k testing prescribes 30 seconds at each of four angular positions (0°, 30°, 60°, 90°) while the specimen rotates continuously. This methodology ensures complete surface coverage under maximum stress conditions.

| Rating | Pressure (kPa) | Temperature (°C) | Flow Rate (L/min) | Application |
| --- | --- | --- | --- | --- |
| IPX5 | 30 | Ambient (15-35) | 12.5 | Moderate water jets |
| IPX6 | 100 | Ambient (15-35) | 100 | Powerful water jets |
| IPX9k | 8000-10000 | 80-88 | 14-16 | High-pressure steam cleaning |

Industries and Applications Requiring IPX9k Certification

Automotive and Transportation Sectors

Heavy-duty vehicles including dump trucks, concrete mixers, and refuse collection vehicles require IPX9k certification due to regular intensive cleaning. To validate compliance, testing with IEC 60529 IPX9k equipment is essential, ensuring that engine compartments, electrical connectors, and sensor housings can withstand automated wash systems operating at industrial pressures and temperatures. Electric vehicle battery enclosures increasingly demand this protection level as manufacturers expand into commercial fleet applications.

Food Processing and Pharmaceutical Equipment

Manufacturing facilities in food and pharmaceutical industries implement stringent sanitation protocols involving high-pressure, high-temperature washdowns between production runs. Processing equipment, control panels, and motor housings require IPX9k protection to prevent contamination while maintaining operational integrity. Stainless steel enclosures with IPX9k-rated sealing systems enable compliance with FDA and HACCP requirements.

Industrial Machinery and Marine Applications

Car wash systems, outdoor equipment manufacturing, and marine vessels utilize IPX9k-rated components exposed to harsh cleaning regimens. Construction equipment operating in mining, tunneling, and quarrying environments undergoes frequent decontamination using industrial pressure washers. Marine electronics protecting navigation and communication systems benefit from IPX9k certification against storm conditions and deck cleaning operations.

Compliance and Documentation for IPX9k Waterproof Tests

Required Testing Parameters and Measurements

Comprehensive test reports must document water pressure readings, temperature measurements, flow rate verification, and nozzle distance confirmations throughout the testing duration. Calibrated instruments require recent certification traceable to national standards. Pre-test and post-test specimen inspections identify any ingress, condensation, or damage resulting from exposure.

Pass/Fail Criteria and Assessment Methods

The equipment under test shall experience no harmful effects from the high-pressure and high-temperature water spray. Acceptance criteria typically permit trace moisture that does not affect product functionality or safety. Internal inspection reveals whether water penetration occurred through seals, vents, or structural gaps. Electrical components undergo insulation resistance testing and functional verification post-exposure.

Certification Bodies and Accreditation Requirements

Third-party testing laboratories holding ISO/IEC 17025 accreditation provide internationally recognized IPX9k certification. Documentation includes detailed test protocols, equipment calibration certificates, photographic evidence, and signed test reports. Manufacturers maintain certification records demonstrating continued compliance through periodic retesting or design validation protocols.

| Documentation Element | Content Requirements | Purpose |
| --- | --- | --- |
| Test Protocol | Detailed procedure steps | Ensures consistency |
| Calibration Certificates | Instrument traceability | Validates measurement accuracy |
| Pre-Test Inspection | Baseline condition documentation | Establishes reference point |
| Test Parameters | Pressure, temperature, flow data | Demonstrates compliance |
| Post-Test Assessment | Visual and functional evaluation | Confirms performance |
| Certification Report | Comprehensive results summary | Provides customer evidence |
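A documentation package can be checked for completeness before it goes to a certification body. The sketch below assumes the package is tracked as a dictionary mapping each element from the table above to a file path or similar reference; that layout and the example file names are hypothetical.

```python
from typing import Dict, List, Optional

# Element names copied from the table above.
REQUIRED_ELEMENTS = (
    "Test Protocol",
    "Calibration Certificates",
    "Pre-Test Inspection",
    "Test Parameters",
    "Post-Test Assessment",
    "Certification Report",
)


def missing_elements(package: Dict[str, Optional[str]]) -> List[str]:
    """Return the required elements that are absent or empty in the package."""
    return [name for name in REQUIRED_ELEMENTS if not package.get(name)]


# Hypothetical package with two elements still outstanding.
package = {
    "Test Protocol": "protocol.pdf",
    "Calibration Certificates": "calibration.pdf",
    "Pre-Test Inspection": "pre_test_photos.zip",
    "Test Parameters": "parameters.csv",
    "Post-Test Assessment": "",
}
print(missing_elements(package))
```

Treating an empty entry the same as a missing one avoids a placeholder being mistaken for a finished document.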

LIB Industry IEC 60529 IPX9k Equipment: Precision Testing Solutions

Advanced Testing Chamber Capabilities

LIB Industry's IEC 60529 IPX9k equipment combines precision-engineered components with automated control systems. The chamber features SUS304 stainless steel interior construction providing corrosion resistance and a mirror-surface finish for easy maintenance. A programmable color LCD touchscreen controller with Ethernet connectivity enables remote monitoring and data logging.

Integrated Water Management Systems

The equipment incorporates a complete water supply system including storage tank, booster pump, automatic water supply, and purification system. Water recycling capabilities reduce operational costs while maintaining environmental responsibility. The heating system uses nichrome elements controlled through PID algorithms maintaining precise temperature stability throughout testing cycles.
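To show what PID control of the water heater involves, here is a minimal sketch of a discrete PID loop driving a heater duty cycle between 0 and 1. It is not LIB Industry's controller: the gains, the 84°C setpoint (chosen as the midpoint of the 80-88°C band), and the first-order tank model are all assumptions made for illustration.

```python
class PID:
    """Discrete PID controller with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd, out_min=0.0, out_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self._integral = 0.0
        self._prev_error = None

    def update(self, setpoint, measured, dt):
        error = setpoint - measured
        derivative = 0.0 if self._prev_error is None else (error - self._prev_error) / dt
        candidate_integral = self._integral + error * dt
        raw = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        output = min(self.out_max, max(self.out_min, raw))
        # Anti-windup: keep the new integral only while the output is not saturated.
        if output == raw:
            self._integral = candidate_integral
        self._prev_error = error
        return output


def simulate_heating(setpoint=84.0, minutes=60, dt=1.0):
    """Run the loop against an assumed first-order tank model; return final temperature."""
    pid = PID(kp=0.2, ki=0.002, kd=0.0)   # illustrative gains, not tuned for real hardware
    ambient = 20.0
    temp = ambient
    for _ in range(int(minutes * 60 / dt)):
        duty = pid.update(setpoint, temp, dt)
        # Assumed plant: up to 0.1 °C/s heating at full duty, losses proportional to (temp - ambient).
        temp += (0.1 * duty - 0.001 * (temp - ambient)) * dt
    return temp


print(round(simulate_heating(), 2))
```

The proportional term alone would settle slightly below the setpoint because the tank keeps losing heat; the integral term is what removes that residual offset.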

Safety Features and Operational Reliability

Multiple protection systems ensure operator safety and equipment longevity. Over-temperature protection prevents heater damage, while over-current protection safeguards electrical components. Water shortage protection prevents pump operation without adequate supply. Earth leakage protection and phase sequence protection provide comprehensive electrical safety. Electromagnetic door locks controlled through the interface prevent accidental opening during testing.
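The protections listed above act as interlocks: a test should not start while any of them reports a fault. The sketch below models that gating logic under the assumption that each protection is exposed as a boolean flag. The `ChamberStatus` fields are hypothetical names and do not come from the equipment's actual interface.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChamberStatus:
    # Hypothetical flags, one per protection named in the text.
    door_locked: bool
    water_supply_ok: bool
    over_temperature: bool
    over_current: bool
    earth_leakage: bool
    phase_sequence_ok: bool


def blocking_faults(status: ChamberStatus) -> List[str]:
    """List every condition that should prevent a test from starting."""
    checks = [
        (not status.door_locked, "door not locked"),
        (not status.water_supply_ok, "water shortage"),
        (status.over_temperature, "heater over-temperature"),
        (status.over_current, "over-current"),
        (status.earth_leakage, "earth leakage"),
        (not status.phase_sequence_ok, "phase sequence error"),
    ]
    return [message for failed, message in checks if failed]


def may_start(status: ChamberStatus) -> bool:
    return not blocking_faults(status)


status = ChamberStatus(
    door_locked=True,
    water_supply_ok=False,
    over_temperature=False,
    over_current=False,
    earth_leakage=False,
    phase_sequence_ok=True,
)
print(may_start(status), blocking_faults(status))
```

Collecting all faults instead of stopping at the first one lets the operator clear every issue in a single pass.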

Customization and Technical Support

LIB Industry provides turnkey solutions encompassing research, design, production, commissioning, delivery, installation, and operator training. Technical specifications can be customized to accommodate specific product dimensions, testing requirements, or industry protocols. The modular design allows for future upgrades as standards evolve or testing needs expand.

Conclusion

IPX9k waterproof testing under IEC 60529 establishes the benchmark for extreme environmental protection certification. Understanding the rigorous pressure, temperature, and exposure requirements enables manufacturers to develop products meeting demanding industry applications. Proper equipment selection, comprehensive testing protocols, and thorough documentation ensure reliable certification outcomes. As industries continue advancing toward more robust product designs, IPX9k testing remains fundamental to quality assurance programs across automotive, food processing, and industrial sectors.

FAQs

What differentiates IPX9k from IP69k rating requirements?